Earlier this month, OpenAI announced the limited release of ChatGPT Health, which will allow users to pour their most intimate HIPAA-protected medical records into the world’s most powerful AI chatbot.

This is a phenomenally bad idea for reasons we’ll explore in a minute. For now, let’s hear OpenAI’s pitch:

Company officials say hundreds of millions of ChatGPT users ask health and wellness questions every week. So they designed an AI chatbot focused on those needs.

According to CNBC:

ChatGPT Health is not intended for diagnosis and treatment, and it’s not supposed to replace medical care. Rather, the experience is supposed to help users navigate everyday questions, and it aims to make ChatGPT’s responses more relevant by grounding them in a user’s own health information.

“ChatGPT Health is another step toward turning ChatGPT into a personal super-assistant that can support you with information and tools to achieve your goals across any part of your life,” said Fidji Simo, CEO of applications at OpenAI.

Why ChatGPT is so much worse than Google

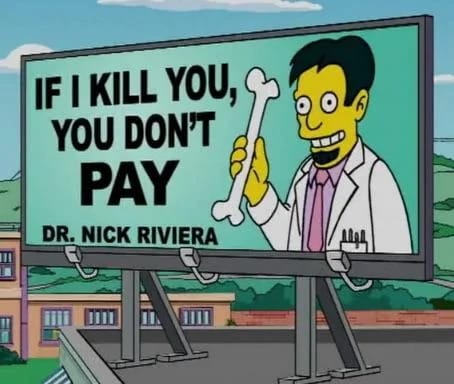

OpenAI is capitalizing on the habit we’ve all acquired: You feel something wrong, you Google the symptoms. Google (the search engine, not the “AI Overview”) won’t return a diagnosis. It will offer links to reputable websites like Mayoclinic.org or Webmd.com. This is a good thing. The Mayo Clinic’s pages on norovirus, gout, what-have-you, were professionally written and vetted by actual physicians.

An AI chatbot is a different beast. ChatGPT is programmed to speak with a friendly, intelligent voice, but the machine is nothing more than a massive pattern-matching mechanism. It draws on patterns learned from the billions of words and sentences in its training data and replies with a probabilistic guess of which word likely comes next, given the words before it and the context of the prompt.
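For readers who want to see the idea of a "probabilistic guess" in miniature, here is a toy sketch in Python. It is not how ChatGPT is built: real chatbots use large neural networks, not simple word counts. The tiny corpus and the function names `train_bigrams` and `next_word_probabilities` are made up for illustration. The point is only that the output is a ranking of likely next words, with no understanding of pain or medicine behind it.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follow-counts for `word` into probabilities."""
    followers = counts.get(word.lower(), Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # the word never appeared with a follower in training
    return {w: n / total for w, n in followers.items()}


# Hypothetical three-sentence "training data", invented for this example.
corpus = "the pain is sharp . the pain is dull . the pain is sharp"
model = train_bigrams(corpus)
probs = next_word_probabilities(model, "is")

# Print the candidate next words, most likely first.
for candidate, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{candidate}: {p:.2f}")
```

In this toy, "sharp" beats "dull" simply because it showed up more often after "is" in the training text. Scale that up by many orders of magnitude and you get fluent, confident-sounding sentences produced by the same basic move: pick a likely next word.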

The machine itself: Will mess you up

You’ve no doubt heard about ChatGPT’s suicide problem. Those issues are real and they are scary. I’ve read the chat logs that helped Adam Raine tie a strong noose and end his life. I don’t believe OpenAI’s hollow assurances about how they’ve “fixed” the problem.

The lawsuits filed by Raine’s parents, and by the mother of Sewell Setzer (whose suit targets Character.AI, a different chatbot company), represent only the tip of the iceberg.

Consider:

Seven more lawsuits against OpenAI were filed in Nov. 2025, alleging similar incidents of suicide and self-harm induced by ChatGPT.

Yesterday The New York Times published a report documenting the increasing numbers of therapy patients spiraling into delusions, psychosis, anxiety, and depression due to their interactions with AI chatbots. “It was like the AI was partnering with them in expanding or reinforcing their unusual beliefs,” said one psychologist.

Three weeks ago SF Gate documented the case of 19-year-old Sam May, who died of an overdose after ChatGPT coached him to “go fully trippy mode.” The bot gave him specific drug doses so he would have stronger hallucinations. The SF Gate reporters noted that “none of this should have been possible, according to the rules set by OpenAI.” May’s chat logs show that the company “has lost full control of its blockbuster product.”

There’s a reason medical records are protected

I’ll hit this point briefly: It is a really, really bad idea to voluntarily offer your personal medical information to one of the world’s most powerful tech companies. Federal HIPAA laws, which guard your medical files, exist for a reason.

Your medical data can be used against you in countless ways. You could lose your insurance, lose your job, or fall victim to blackmail. Any data voluntarily given to an app or a tech company is liable to be sold to third-party data brokers and peddled to bad actors. Hacks and data breaches happen to even the most secure companies.

Don’t do it.

At the same time, millions of Americans need the help

Even as I tell you all the reasons not to use ChatGPT to diagnose your recent dizzy spell, I know that millions of Americans like me desperately need the help ChatGPT Health offers.

The American healthcare system repeatedly ranks as the worst among high-income countries. Even those of us fortunate enough to have health insurance must wait months to see a provider and then fight like hell to get our insurer to cover the visit.

This came home to me yesterday as I read an article about men’s sexual health.

The gist of the piece was that men don’t talk about personal plumbing problems because they’re too embarrassed. So they resort to the internet, where they find misinformation.

“I wish more young men would take the time to speak to their primary care physician about health questions rather than get their advice from AI or social media,” said Dr. Tony Chen, a urologist at Stanford Medicine in California.

That quote made my ears steam.

Dr. Chen, I wish more American men had the chance to speak to their primary care physicians. About anything, plumbing or otherwise. Many of us just saw our monthly health insurance premiums double, triple, or worse. More than 300,000 federal workers lost their health insurance along with their jobs last year. Tech workers replaced by AI are also finding themselves cut off from their doctors.

More and more of us dream of the chance to see a primary care physician.

But we can’t. So we turn to ChatGPT.

Which offers us a friendly voice, an intelligent answer, and information that sounds accurate. We’re just hoping it doesn’t go full trippy mode.

MEET THE HUMANIST

Bruce Barcott, founding editor of The AI Humanist, is a writer known for his award-winning work on environmental issues and drug policy for The New York Times Magazine, National Geographic, Outside, Rolling Stone, and other publications.

A former Guggenheim Fellow in nonfiction, he is the author of The Measure of a Mountain, The Last Flight of the Scarlet Macaw, and Weed the People.

Bruce currently serves as Editorial Lead for the Transparency Coalition, a nonprofit group that advocates for safe and sensible AI policy. Opinions expressed in The AI Humanist are those of the author alone and do not reflect the position of the Transparency Coalition.

Portrait created with the use of Sora, OpenAI’s imaging tool.